Adventures in Why

A Machine Learning Blog

Bob Wilson (he/him/his) is a Marketing Data Scientist at Meta Reality Labs, where he helps introduce the world to the future of technology. Prior roles include data science at Netflix, Director of Data Science (Marketing) at Ticketmaster, and Director of Analytics at Tinder. His interests include causal inference and convex optimization. When not tweaking his Emacs init file, Bob enjoys gardening, listening/singing along to Broadway musical soundtracks, and surfeiting on tacos.

Interests

- Causal Inference

- Convex Optimization

- Theoretical Statistics

Education

Recent Musings

Design-Based Inference and Sensitivity Analysis for Survey Sampling

In this note, we consider sampling from a finite population, without replacement and with unequal probabilities. We seek an estimate of the population mean of some characteristic.

Principal Stratification and Mediation

This post explores principal stratification and mediation analysis as tools for understanding causal effects, decomposing them into direct and indirect components. It covers scenarios like non-compliance, missing outcomes, and surrogate indices, highlighting the importance of assumptions such as no direct effects and no Defiers. Practical methods, including multiple imputation, regression, and matching, are discussed for estimating effects even when key quantities are unobserved. Real-world examples, like marketing lift studies and product funnels, illustrate the relevance of these techniques for addressing complex causal questions.

Interpretable and Validatable Uplift Modeling

In this note, we introduce a method for interpreting and validating the results of uplift modeling. We propose two novel strategies for controlling the Familywise Error Rate in this setting.

Modes of Inference in Randomized Experiments

Randomization provides the “reasoned basis for inference” in an experiment. Yet some approaches to analyzing experiments ignore the special structure of randomization. Simple, familiar approaches like regression models sometimes give wrong answers when applied to experiments. Approaches exploiting randomization deliver more reliable inferences than methods neglecting it. Randomization inference should be the first method we reach for when analyzing experiments.

Sensitivity Analysis for Matched Sets with One Treated Unit

Adjusting for observed factors does not elevate an observational study to the reliability of an experiment. P-values are not appropriate measures of the strength of evidence in an observational study. Instead, sensitivity analysis allows us to identify the magnitude of hidden biases that would be necessary to invalidate study conclusions. This leads to a strength-of-evidence metric appropriate for an observational study.

Projects

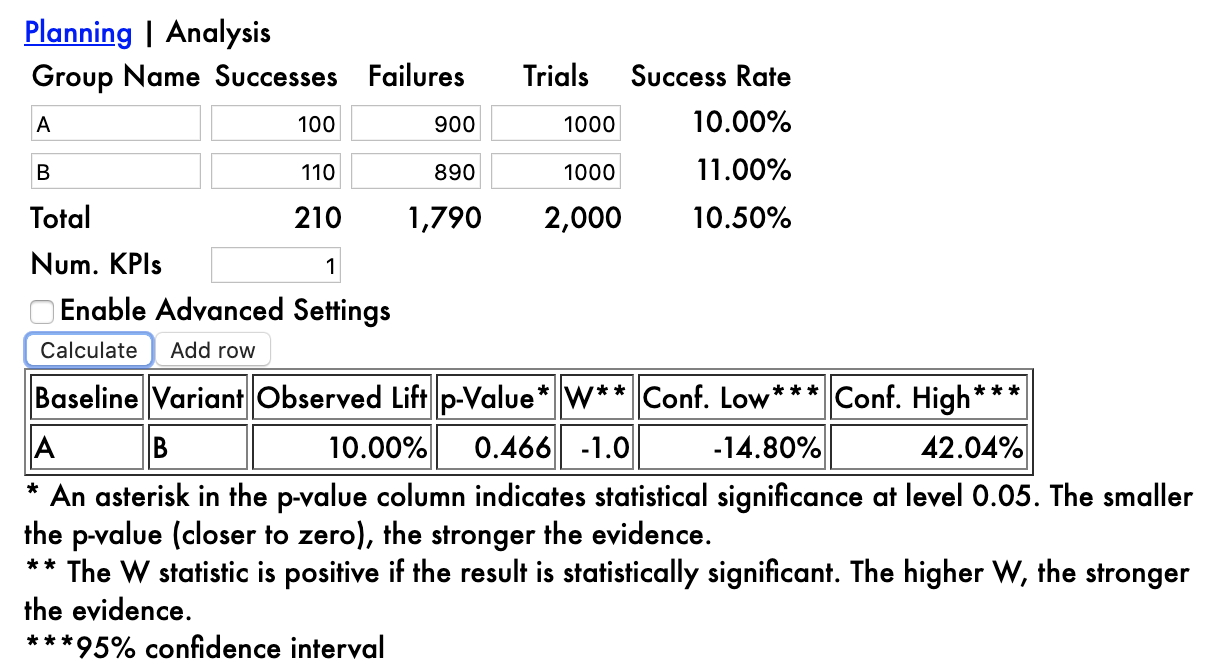

A/B Testing

Calculators for planning and analyzing A/B tests

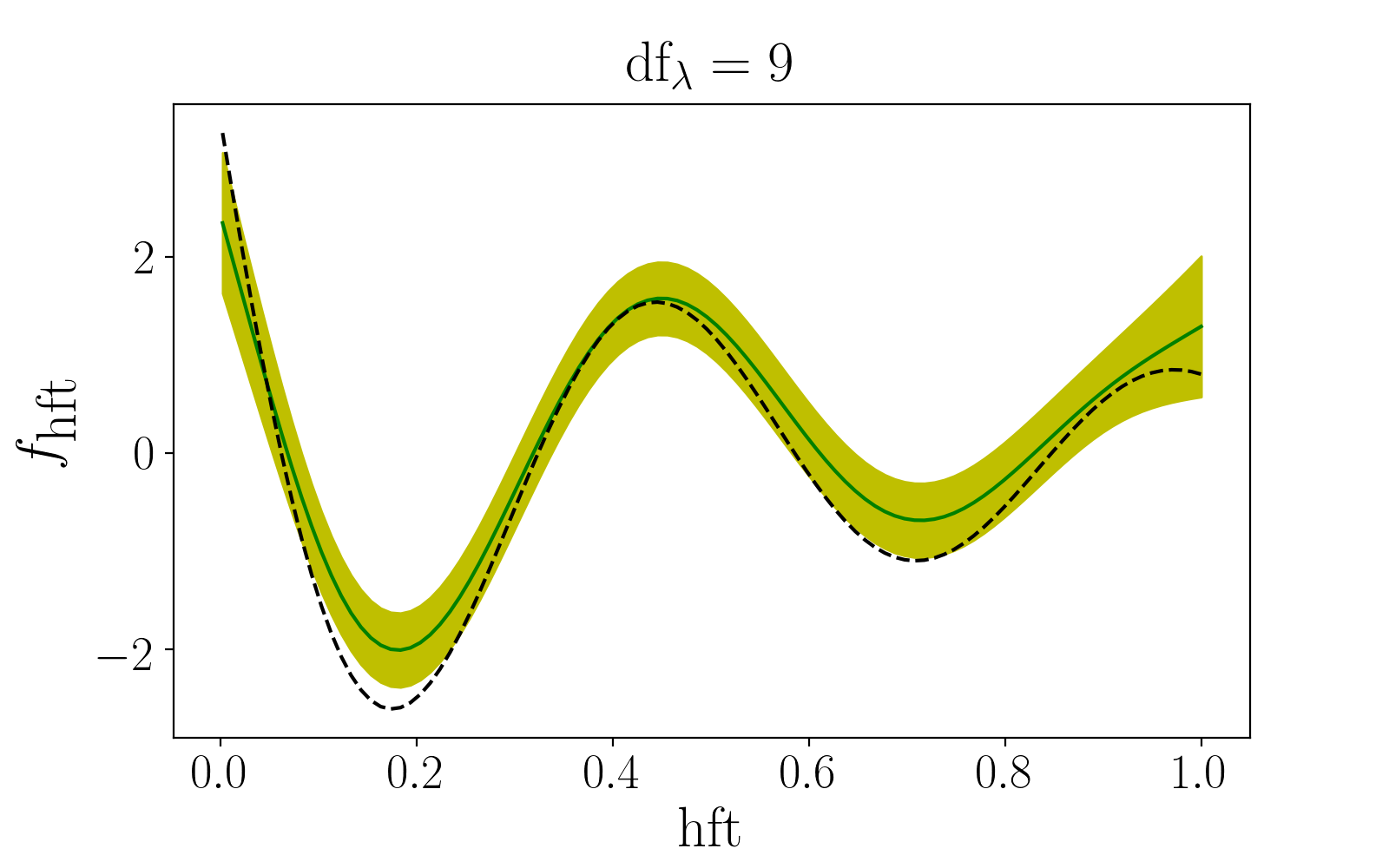

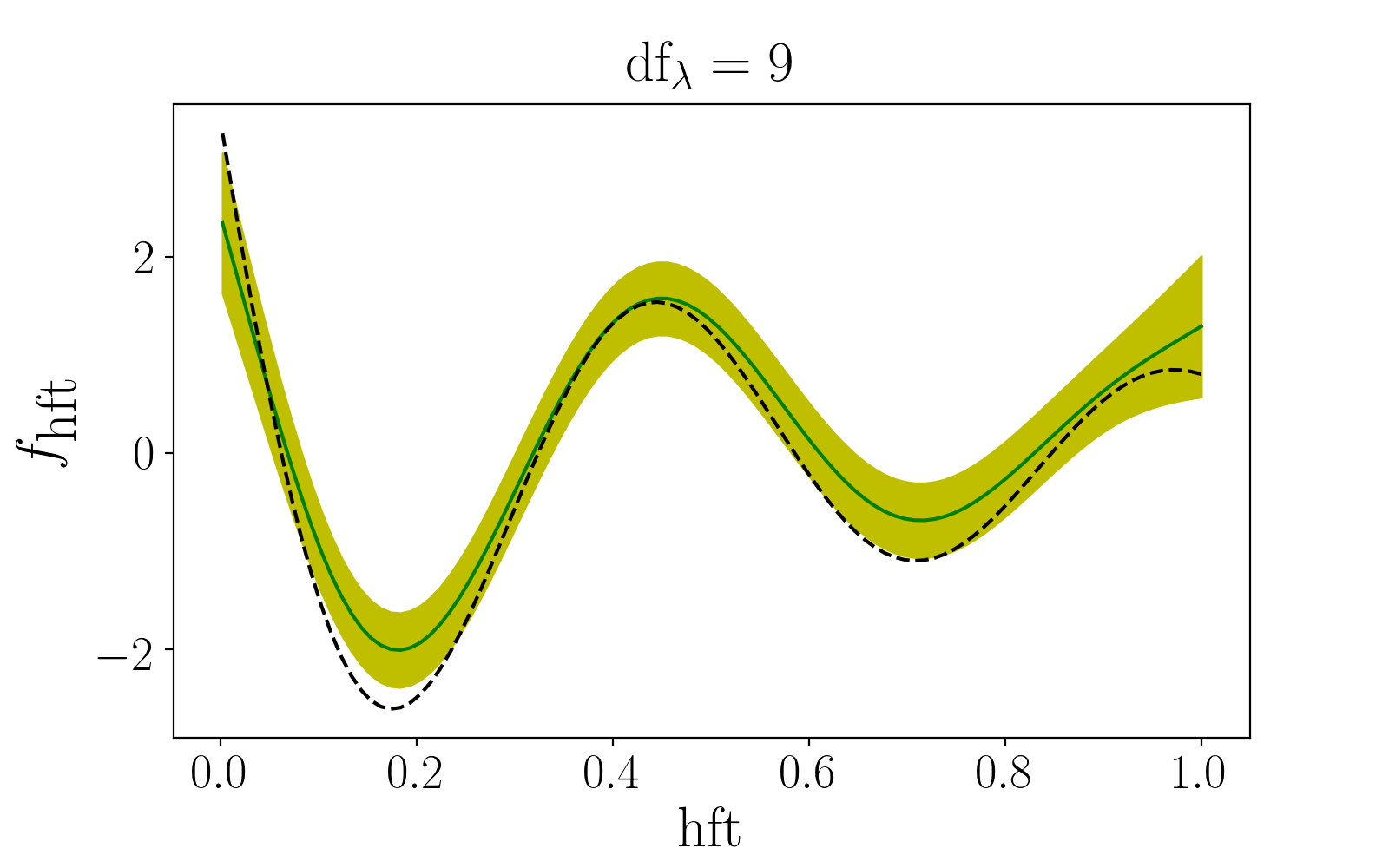

gamdist

Generalized Additive Models in Python

orbpy

Orbit Propagator in Python

Homebrew-Calc

Homebrewed Beer Calculator

Unit Parser

Unit Parser and Conversions in Python

Selected Papers

gamdist

Self-published

Over the last five years, gamdist has formed the backbone of my research agenda. While it is very much a work in progress, this paper summarizes everything I have learned about regression. I think it is most useful as a collection of references! Still to come: details on regularization and the alternating direction method of multipliers.

Robust Orbit Determination via Convex Optimization

Project Report, EE364B, Stanford University

We present a method of orbit determination robust against non-normal measurement errors. We approach the non-convex optimization problem by repeatedly linearizing the dynamics about the current estimate of the orbital parameters, then minimizing a convex cost function involving a robust penalty on the measurement residuals and a trust region penalty.

ThankBeer: A Beer Recommendation Engine

Paper for CS229 at Stanford University, Fall Quarter 2012.

We discuss a beer recommendation engine that predicts whether a user has had a given beer as well as the rating the user will assign that beer based on the beers the user has had and the assigned ratings. k-means clustering is used to group similar users for both prediction problems. This framework may be valuable to bars or breweries trying to learn the preferences of their demographic, to consumers wondering what beer to order next, or to beer judges trying to objectively assess quality despite subjective preferences.

Other Papers

Star Identification via Computer Vision Techniques

Paper for EE368 at Stanford University, Spring Quarter 2012.

We discuss a method for identifying stars in a photograph. We first filter the image using region labeling and locally adaptive thresholding. We then compare the stars in the image to a star catalog. This project turned out to be overly-ambitious for a class project, and we never really got it working.

A Discussion of Relativistic Phenomena and Construction of Spacetime Diagrams

A paper I wrote at the request of a curious friend

We discuss how the Special Theory of Relativity proceeds from the absence of an absolute definition of stationarity, as well as the observation that light travels at the same speed in all reference frames. Some interesting phenomena follow: two observers in relative motion cannot always agree on the length of an object, the time between two events, or even in what order the events occurred.

Recent & Upcoming Talks

Beyond A/B Testing: Getting More from Experiments

Nike Experimentation and Causal Inference Lunch & Learn Series

In my journey to improve the design and analysis of A/B tests, I have turned to the literature on observational causal inference. Along the way, I have learned several techniques to level up experiments. These techniques include tests of equivalence and non-inferiority, closed testing procedures, methods for non-compliance, and heterogeneous treatment effects.